You open three tabs. One has ChatGPT, one has Claude, one has Gemini. You copy-paste the same prompt into all three, compare the results, and still aren’t sure which one to trust with your actual work.

Sound familiar?

By mid-2025, the gap between the top AI models has narrowed dramatically, and that’s exactly what makes choosing harder, not easier. GPT-4o, Claude 4 Sonnet, and Gemini 2.5 Pro are all genuinely capable. But they’re not interchangeable. Each has a distinct personality, a different set of strengths, and a different price tag, with the gap widening sharply once premium tiers such as Anthropic’s Opus enter the picture.

This article breaks down what each model actually does best, where it falls short, and — most importantly — how to stop guessing and start matching the right tool to the right task.

What’s Actually Different Right Now (Quick Catch-Up)

The current generation of flagship models arrived in rapid succession:

- May 2024: OpenAI released GPT-4o, its fastest and most capable multimodal model at the time, with native vision, audio, and text in one architecture.

- June 2024: Anthropic shipped Claude 3.5 Sonnet, which topped coding benchmarks and became the default for many developers; an upgraded version followed in October 2024. In May 2025, Claude 4 Sonnet (officially named Claude Sonnet 4) arrived, extending that lead with improved agentic capabilities and a larger output window.

- March 2025: Google launched Gemini 2.5 Pro, a “thinking model” with dramatically improved reasoning and a 1-million-token context window out of the box.

Three models, three different bets on where AI is most useful. Let’s look at each one on its own terms.

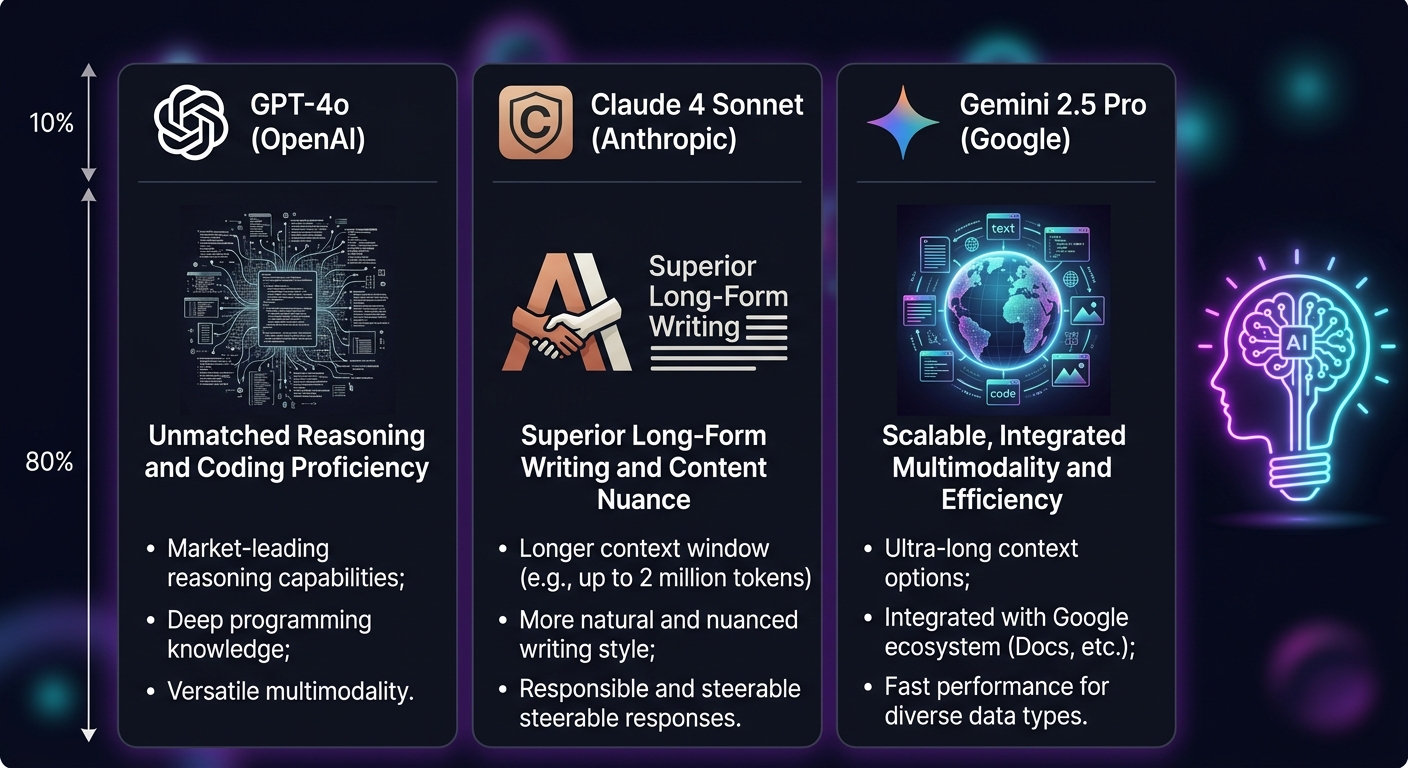

GPT-4o: Best for Multimodal Versatility and Ecosystem Integration

If you need one model that handles text, images, audio, and video inputs without breaking a sweat — and you want the deepest integration with third-party tools — GPT-4o remains the most broadly capable option.

GPT-4o was designed as a natively multimodal model: it doesn’t bolt image understanding onto a text model. It processes text, vision, and audio through a single architecture, which makes interactions feel faster and more coherent when you’re working across input types.

On the Chatbot Arena Elo leaderboard, GPT-4o consistently ranks in the top tier for general conversational quality. Its strength is breadth: it performs well across a wide range of tasks without a dramatic weakness in any single area.

Context window: 128K tokens input, 16K tokens output.

API pricing: $2.50 per million input tokens, $10.00 per million output tokens — the middle ground in the current market.

Where it shines:

– General-purpose assistant work where you need solid performance across diverse tasks

– Multimodal inputs — analyzing images, interpreting charts, working with audio

– Integration with the OpenAI ecosystem (custom GPTs, function calling, plugins)

– Fast response times for interactive use

Where it’s not the obvious choice:

– If you need the absolute highest code accuracy, Claude edges it out

– If you’re processing extremely long documents (500+ pages), Gemini’s 1M context window has a structural advantage

– Complex multi-step reasoning tasks, where dedicated “thinking” models outperform it

Claude 4 Sonnet: Best for Code and Deep Analysis

Claude 4 Sonnet currently holds a strong lead in software engineering benchmarks — and the gap is not small.

On SWE-bench Verified, the gold-standard benchmark for real-world software engineering tasks, Claude models have consistently scored at or near the top. Claude 4 Sonnet also supports extended thinking, a capability first introduced with Claude 3.7 Sonnet, which lets it work through complex problems step by step, producing output that’s closer to what a senior developer would write: traceable, self-correcting, and well-structured.

Beyond coding, Claude’s strength is in long-form, nuanced work — legal analysis, research synthesis, complex document review. Its extended thinking approach means it doesn’t just output an answer; it works through the problem in a way that makes the output more auditable and trustworthy.

Context window: 200K tokens input. Anthropic’s documentation lists a 64K-token output limit for this model (the 128K figure applied to Claude 3.7 Sonnet with a beta option), which is still about four times GPT-4o’s 16K and on par with Gemini 2.5 Pro. If you need Claude to produce a detailed 40,000-word document in one go, it can.

API pricing (Claude 4 Sonnet): $3.00 per million input tokens, $15.00 per million output tokens. For heavier tasks, Anthropic’s Opus tier runs significantly higher. Claude is generally the most expensive option at the top end.
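
To make the per-token prices quoted so far more concrete, here’s a minimal sketch that estimates what a workload would cost on GPT-4o versus Claude 4 Sonnet. The prices come from this article; the model keys, the `estimate_cost` helper, and the workload (1,000 requests of 2,000 input and 500 output tokens each) are hypothetical and only there for illustration. Check current pricing pages before relying on the numbers.

```python
# USD per million tokens, as quoted in this article (may be out of date).
PRICES = {
    "gpt-4o": {"input": 2.50, "output": 10.00},
    "claude-4-sonnet": {"input": 3.00, "output": 15.00},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated API cost in USD for the given token counts."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical workload: 1,000 requests, 2,000 input + 500 output tokens each.
REQUESTS = 1_000
for model in PRICES:
    cost = estimate_cost(model, REQUESTS * 2_000, REQUESTS * 500)
    print(f"{model}: ${cost:.2f}")
# gpt-4o: $10.00
# claude-4-sonnet: $13.50
```

On this particular mix the difference is about 35%. Because Claude’s output price is 50% higher than GPT-4o’s, the gap grows as responses get longer relative to prompts.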

Where it shines:

– Software development and code review

– Legal, financial, and technical document analysis

– Tasks that require long, detailed, carefully-reasoned output

– Situations where accuracy is worth paying a premium for

Where it’s not the obvious choice:

– High-volume, cost-sensitive workflows

– Processing video or very large multimodal datasets (Gemini handles this natively at scale)

– Simple, fast tasks where you’re paying a premium for capability you don’t need

Gemini 2.5 Pro: Best for Long-Context Reasoning at Scale

Gemini 2.5 Pro arrived in March 2025 with two things going for it: a dramatic jump in reasoning performance and a context window that dwarfs the competition.

Google describes Gemini 2.5 Pro as a “thinking model” — it can engage in extended internal reasoning before producing a response, similar to what OpenAI’s o-series and Claude’s extended thinking offer. On benchmarks like GPQA Diamond (PhD-level science questions) and AIME 2025 (competition mathematics), Gemini 2.5 Pro posted scores competitive with or ahead of the best available models at launch.

The 1 million token context window is standard, and it’s built with multimodal processing as a core feature — not an add-on. Feeding in a two-hour video, a 500-page PDF, and a dataset spreadsheet simultaneously is exactly the kind of workflow Gemini is designed for.
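
For a feel of what that context window means in practice, here’s a rough back-of-the-envelope check of which models could take a document of a given length in a single prompt. The window sizes are the ones quoted in this article. The conversion factors (about 500 words per page and 0.75 words per token) and the 8,000-token reserve for the question and answer are assumptions, not measured values, so treat the result as an estimate rather than a guarantee.

```python
# Input context windows in tokens, as quoted in this article.
CONTEXT_WINDOWS = {
    "gpt-4o": 128_000,
    "claude-4-sonnet": 200_000,
    "gemini-2.5-pro": 1_000_000,
}

# Assumptions (not from the article): typical page density and tokenization.
WORDS_PER_PAGE = 500
WORDS_PER_TOKEN = 0.75


def estimate_tokens(pages: int) -> int:
    """Roughly estimate how many tokens a document of `pages` pages uses."""
    return round(pages * WORDS_PER_PAGE / WORDS_PER_TOKEN)


def models_that_fit(pages: int, reserve_tokens: int = 8_000) -> list[str]:
    """List models whose window holds the document plus a reserve for the
    prompt and the response."""
    needed = estimate_tokens(pages) + reserve_tokens
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


print(estimate_tokens(500))   # 333333
print(models_that_fit(500))   # ['gemini-2.5-pro']
print(models_that_fit(80))    # ['gpt-4o', 'claude-4-sonnet', 'gemini-2.5-pro']
```

Under these assumptions, a 500-page PDF only fits in Gemini’s window, while an 80-page report fits comfortably in all three.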

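Pricing: At the API level, Gemini 2.5 Pro lists at $1.25 per million input tokens and $10.00 per million output tokens for prompts up to 200K tokens, with higher rates beyond that. That makes it cheaper than Claude for comparable tasks, particularly for high-volume input processing, where the per-token cost difference compounds quickly.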

Where it shines:

– Large-scale research involving video, images, audio, and documents

– Tasks requiring long-context reasoning across diverse input types

– Scenarios where you’re running many queries and cost is a real constraint

– Complex logical and mathematical reasoning

Where it’s not the obvious choice:

– Pure code generation (Claude holds the edge here)

– Short, conversational tasks where its thinking overhead isn’t fully utilized

– If you’re deeply integrated into the OpenAI ecosystem already

The Practical Decision Framework

Stop asking “which model is best?” Start asking “best for what?”

Here’s a simple way to think about it:

| Task | Best Model | Why |
|---|---|---|
| Writing and reviewing code | Claude 4 Sonnet | Top SWE-bench scores, best reasoning depth for code |
| Analyzing very large documents/video | Gemini 2.5 Pro | 1M native context, built-in multimodal processing |
| General chat and multimodal tasks | GPT-4o | Broadest capability, fastest responses |
| Budget-sensitive high-volume work | Gemini 2.5 Pro | Significantly cheaper per token than Claude |
| Long single-response output | Claude 4 Sonnet or Gemini 2.5 Pro | Roughly 64K output tokens per response, versus 16K for GPT-4o |
| Complex math and science reasoning | Gemini 2.5 Pro | Strong AIME and GPQA scores in thinking mode |
| Ecosystem and plugin integration | GPT-4o | Deepest third-party tool support |
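
If you route requests programmatically, the table above boils down to a lookup. Here’s a minimal sketch of that idea, covering a few of its rows. The task keys and the `pick_model` helper are hypothetical names, and falling back to GPT-4o for unknown tasks is an assumption based on its general-purpose positioning in this article, not a rule.

```python
# Task category -> recommended model, following this article's table.
# The category keys are made up for illustration.
ROUTING_TABLE = {
    "code": "Claude 4 Sonnet",
    "large_documents_or_video": "Gemini 2.5 Pro",
    "general_chat": "GPT-4o",
    "high_volume_budget": "Gemini 2.5 Pro",
    "math_and_science": "Gemini 2.5 Pro",
    "ecosystem_integration": "GPT-4o",
}

# Assumption: the generalist model is the fallback for anything unlisted.
DEFAULT_MODEL = "GPT-4o"


def pick_model(task: str) -> str:
    """Return the recommended model for a task category."""
    return ROUTING_TABLE.get(task, DEFAULT_MODEL)


for task in ("code", "large_documents_or_video", "something_else"):
    print(f"{task} -> {pick_model(task)}")
# code -> Claude 4 Sonnet
# large_documents_or_video -> Gemini 2.5 Pro
# something_else -> GPT-4o
```

A plain dictionary keeps the mapping easy to revise as models and prices change, which in this market is often.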

The honest answer is that most users don’t need to pick one and stick with it. You need access to all of them, and the ability to switch based on the task at hand.

That’s exactly the problem OximoAI was built to solve.

How OximoAI Gives You Access to All Three (Without the Subscription Overhead)

Getting direct access to GPT-4o, Claude 4 Sonnet, and Gemini 2.5 Pro individually means managing three separate subscriptions, three interfaces, and — if you’re outside the US — potentially three VPN configurations. At $20/month each, that’s $60/month before you’ve opened a single document.

OximoAI brings all the top models into one Telegram bot with pay-as-you-go pricing. No subscriptions, no monthly commitments, no VPN required. You pay for what you actually use.

Here’s what a concrete workflow looks like:

You’re a developer who needs to do three things today: debug a Python script, analyze a competitor’s 80-page annual report, and draft a LinkedIn post about the findings.

- Open @OximoAI_bot in Telegram

- Select Claude 4 Sonnet → paste your Python error → get a detailed fix with explanation in about 20 seconds

- Switch to Gemini 2.5 Pro → upload the PDF → ask “summarize the key financial risks in bullet points” → get a structured breakdown from 80 pages in under a minute

- Switch to GPT-4o → “Write a LinkedIn post based on this summary, professional tone, 150 words” → done

Three models, three tasks, one interface. No tab-switching, no copy-pasting between platforms, no “which account did I log into?”

Beyond text, OximoAI also handles image generation, text-to-speech with voice cloning, and AI agents with persistent memory — so the assistant you configure for your coding work actually remembers your stack and preferences across conversations.

New users start with 30 free coins — enough to run a meaningful test across multiple models — with no credit card required. Paid top-ups start from a small amount, and the coin system means you’re never locked into paying for a model tier you don’t use.

Stop Picking Favorites, Start Picking the Right Tool

The three flagship AI models have each carved out territory where they genuinely lead:

- GPT-4o owns multimodal versatility and ecosystem breadth

- Claude 4 Sonnet leads in code quality and deep analytical output

- Gemini 2.5 Pro wins on long-context reasoning and cost efficiency

The wrong move is committing to one and forcing every task through it. The right move is having fast, frictionless access to all three — and choosing based on what you’re actually trying to accomplish.

If you’re spending time and money managing multiple AI subscriptions, or you’ve been stuck using just one model because switching is too much friction, give OximoAI a try. Thirty coins are waiting for you the moment you press Start — no card, no commitment, no VPN.

→ Try it now: @OximoAI_bot